The Sovereign AI Command Center

Stop chatting with AI. Start commanding a project-aware workforce. Stack your keys, automate your labor, and build a persistent Project Brain.

Stop jumping between ChatGPT and Claude tabs. Linear chat histories forget your project's decisions. HQ remembers everything.

Don't wait for your single subscription to reset. Stack your keys and let HQ rotate them automatically to keep you in flow.
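As a rough illustration of key rotation, here is a minimal sketch. It is not HQ's actual implementation: `KeyPool`, `RateLimited`, and the `send` callback are hypothetical names, and the sketch assumes a rate-limited key surfaces as an exception (for example, after an HTTP 429).

```python
from collections import deque


class RateLimited(Exception):
    """Hypothetical error raised when a key hits a rate limit (HTTP 429)."""


class KeyPool:
    """Round-robin pool that moves on to the next key after a rate limit."""

    def __init__(self, keys):
        if not keys:
            raise ValueError("at least one key is required")
        self._keys = deque(keys)

    def call(self, send):
        """Try send(key) with each key at most once; return the first success."""
        for _ in range(len(self._keys)):
            key = self._keys[0]
            try:
                return send(key)
            except RateLimited:
                # Push the exhausted key to the back and try the next one.
                self._keys.rotate(-1)
        raise RateLimited("every key in the pool is rate limited")


def fake_send(key):
    """Stand-in for a provider call: 'key-a' is pretending to be exhausted."""
    if key == "key-a":
        raise RateLimited()
    return f"ok via {key}"


pool = KeyPool(["key-a", "key-b"])
print(pool.call(fake_send))  # ok via key-b
```

A real pool would likely also track when each key's limit resets, so an exhausted key is not retried too early.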

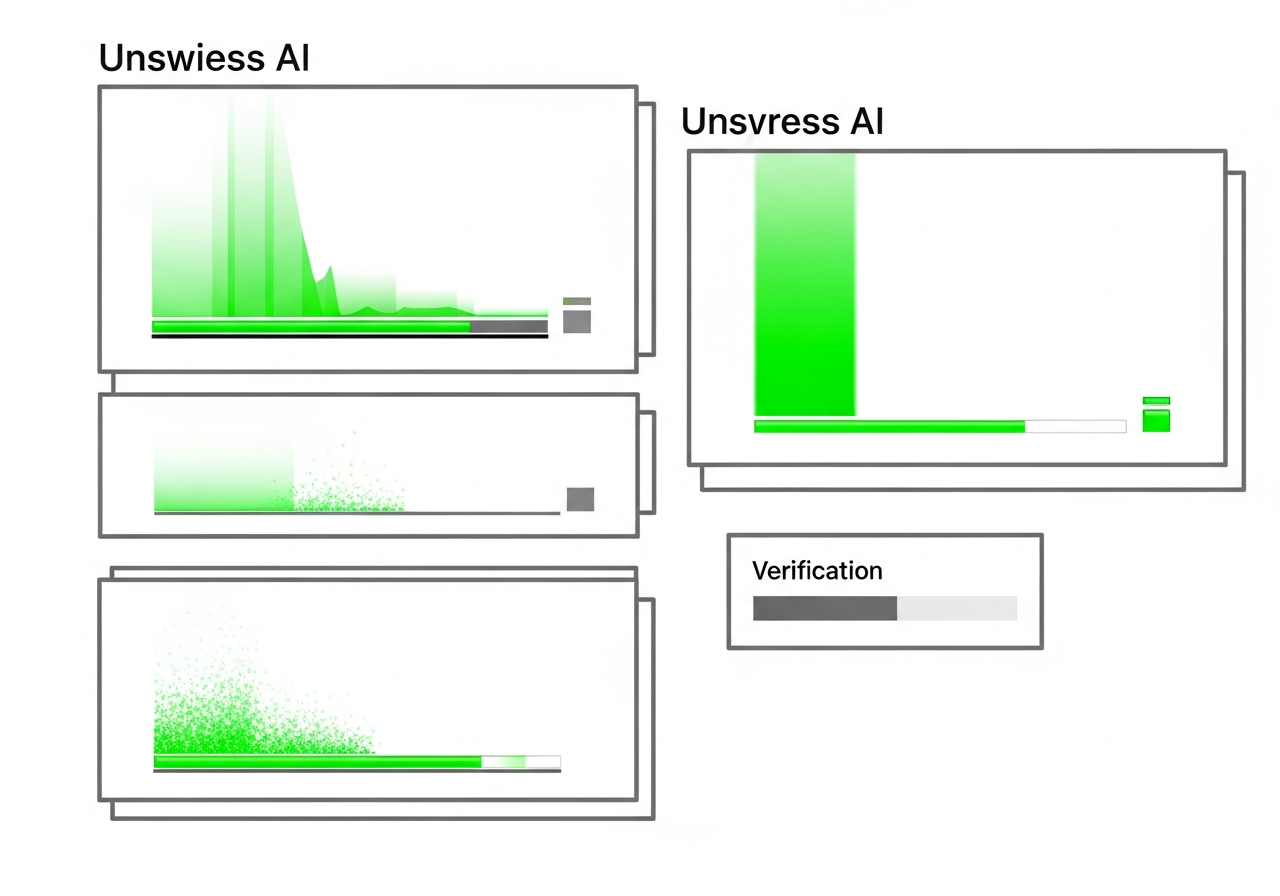

Quit overpaying for simple prompts on premium models. Smart Routing picks the right model for the job and can cut your model spend by up to 90%.

Connect Groq, OpenAI, Anthropic, or local Ollama instances. HQ turns them into a unified, high-performance pool.

Run /ship or /intel to trigger parallel agents that research, architect, and code while you stay focused.

Use /decide to filter chat noise into a permanent Project Brain that feeds back into future messages.
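To make the idea concrete, here is a minimal sketch of a decision store that feeds back into later messages. `ProjectBrain`, `decide`, and `build_prompt` are hypothetical names, and the sketch keeps decisions in memory only; how HQ extracts and persists decisions is not described here.

```python
class ProjectBrain:
    """In-memory list of recorded decisions (a real store would persist them)."""

    def __init__(self):
        self.decisions = []

    def decide(self, text):
        """Record a decision once, ignoring blanks and exact duplicates."""
        text = text.strip()
        if text and text not in self.decisions:
            self.decisions.append(text)

    def build_prompt(self, message):
        """Prepend the recorded decisions to an outgoing message."""
        if not self.decisions:
            return message
        context = "\n".join(f"- {d}" for d in self.decisions)
        return f"Project decisions:\n{context}\n\n{message}"


brain = ProjectBrain()
brain.decide("Use PostgreSQL for storage")
print(brain.build_prompt("Write the migration script."))
```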

Our Smart Routing engine picks Llama-3 for simple tasks, only escalating to premium models when the complexity demands it.
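A toy version of complexity-based routing might look like the sketch below. The scoring heuristic, the keyword list, the threshold, and the `premium-model` label are all assumptions made for illustration; they are not HQ's actual routing rules.

```python
def estimate_complexity(prompt: str) -> int:
    """Crude, assumed heuristic: long prompts and 'hard' keywords score higher."""
    hard_words = ("architecture", "refactor", "prove", "debug", "design")
    score = len(prompt.split()) // 50  # one point per 50 words
    score += sum(2 for word in hard_words if word in prompt.lower())
    return score


def pick_model(prompt: str, threshold: int = 2) -> str:
    """Return the cheap model unless the score reaches the threshold."""
    if estimate_complexity(prompt) >= threshold:
        return "premium-model"  # hypothetical placeholder name
    return "llama-3"


print(pick_model("Summarize this sentence."))
print(pick_model("Refactor the auth module and debug the race condition."))
```

The first prompt scores below the threshold and stays on the cheaper model; the second contains two of the assumed keywords and escalates.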

Kick off research, drafting, and coding simultaneously. HQ orchestrates the cross-agent handoffs for you.
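The concurrent kickoff can be sketched with Python's standard `asyncio` library. `run_agent` is a hypothetical stand-in that returns a string instead of calling a model, and the sketch covers only starting the agents together, not the handoffs between them.

```python
import asyncio


async def run_agent(name: str, task: str) -> str:
    """Stand-in for a real agent; a real one would call a model here."""
    await asyncio.sleep(0)  # yield control, as a network call would
    return f"{name}: finished {task}"


async def run_parallel(task: str) -> list:
    """Start all three agents at once; results come back in call order."""
    return await asyncio.gather(
        run_agent("research", task),
        run_agent("draft", task),
        run_agent("code", task),
    )


if __name__ == "__main__":
    for line in asyncio.run(run_parallel("login page")):
        print(line)
```

`asyncio.gather` returns results in the order the coroutines were passed in, regardless of which one finishes first, which keeps downstream steps predictable.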

Self-host on your own hardware or private cloud. Your keys and your data are never used to train someone else's model.

HQ is built for users who hit 429s for breakfast and demand privacy for their intellectual property.

Because ChatGPT doesn't have project-level memory or multi-provider routing. HQ allows you to stack unlimited keys and maintain separate context brains for every project.

Absolutely. HQ is self-hosted. Your API keys are encrypted at rest and your project data never leaves your infrastructure.

Yes. Switch model providers per-message or set up Smart Routing to handle it automatically based on prompt complexity and latency.

It's a structured repository of decisions and context that HQ extracts from your chats. It ensures the AI always knows your tech stack and goals.

We are opening only 100 beta slots for the first wave of Account Stackers. Join the waitlist to reserve your instance.

Plus: Get the 'Account Stacker' setup guide upon joining.